Summary:

Understanding HIPAA compliant app development is crucial for any healthcare business handling sensitive patient data. This blog explores HIPAA regulations, the types of protected information, and why compliance is essential. It also outlines key security features, development processes, common challenges, and cost considerations. Whether you’re a startup, healthcare provider, or SaaS platform, this guide helps you build secure, compliant applications while reducing legal risks and strengthening patient trust.

Today, many people use mobile apps to manage their health, from booking appointments to checking reports. With this growing use, protecting patient data has become essential. A HIPAA compliant app helps ensure that sensitive information stays safe. In this blog, we’ll break down what HIPAA compliance means, why it matters, and how to build an app that follows these rules.

What is HIPAA Compliance in Healthcare Apps?

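HIPAA, short for the Health Insurance Portability and Accountability Act, is a U.S. law designed to protect patient privacy and secure sensitive health information. When we say an app is “HIPAA compliant,” it means the app, and the way it is operated, meets HIPAA’s privacy and security requirements for protected health information (PHI). Those requirements cover how the data is stored and transmitted, who can access it, and what happens if it is exposed.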

Who needs to follow it?

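HIPAA compliance isn’t just for big healthcare organizations. The law applies to “covered entities” (healthcare providers, health plans, and healthcare clearinghouses) and to their “business associates,” the vendors that handle PHI on their behalf. In practice, that includes: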

- Healthcare Providers: Doctors, clinics, hospitals, and any other providers handling patient health information need to comply.

- Startups: New companies building healthcare products must plan for compliance from day one if their product will handle PHI for providers or health plans.

- SaaS Platforms: Software vendors that create, receive, store, or transmit PHI for healthcare organizations are business associates under HIPAA and must follow its rules.

- Developers: Teams building apps that handle PHI are responsible for implementing the safeguards HIPAA requires.

What kind of data is protected?

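Protected Health Information (PHI) is any health, treatment, or payment information that can be linked to an individual patient. Identifying details count as PHI when they are tied to that information. Examples include: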

- Names

- Email addresses

- Health records

- Billing information

- Appointments

Grasping what data is under protection is crucial for any organization dealing with health information.
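One practical consequence of knowing which fields are PHI is keeping them out of application logs, crash reports, and analytics events. The Python sketch below assumes a hypothetical `PHI_FIELDS` set and a hypothetical `redact_for_logging` helper. It masks the listed fields and any email addresses typed into free text, but it is not a complete de-identification method (HIPAA’s Safe Harbor method, for instance, lists 18 types of identifiers).

```python
import re

# Hypothetical field names this example app treats as PHI.
# In a real project, derive this set from your data inventory.
PHI_FIELDS = {"name", "email", "diagnosis", "billing_account", "appointment_reason"}

# Matches email addresses typed into free-text fields (emails only, nothing else).
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def redact_for_logging(event: dict) -> dict:
    """Return a copy of `event` with PHI fields and embedded emails masked."""
    safe = {}
    for key, value in event.items():
        if key in PHI_FIELDS:
            safe[key] = "[REDACTED]"
        elif isinstance(value, str):
            safe[key] = EMAIL_PATTERN.sub("[REDACTED]", value)
        else:
            safe[key] = value
    return safe
```

Keeping an explicit list of PHI fields also gives reviewers one place to check whenever the app starts collecting new data.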

Why HIPAA Compliance is Essential for Healthcare Mobile Apps

As healthcare apps proliferate, so do the risks of data breaches involving patient information.

Rising use of Healthcare Apps

With more patients turning to apps for everything from booking appointments to accessing their medical records, the call for stringent security measures has never been louder. Non-compliance not only puts data at risk but could also lead to expensive legal issues.

Legal consequences of non-compliance

The penalties for not adhering to HIPAA regulations can be quite severe. Organizations may face:

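- Fines: Civil penalties range from hundreds of dollars per violation to millions of dollars per year, depending on how negligent the organization was.

- Lawsuits: State attorneys general can bring civil actions over HIPAA violations, and breaches frequently lead to costly litigation that drains a healthcare provider’s financial resources.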

Trust Factor

Patients genuinely care about their privacy. Knowing that their data is secure fosters trust in their healthcare provider. For providers, building this trust translates directly into patient loyalty and engagement.

Business Impact

Failure to comply can tarnish a healthcare provider’s reputation and obstruct vital partnerships, which ultimately stifles growth and scalability.

What Data Does HIPAA Protect in Healthcare Applications?

HIPAA zeroes in on data classified as Protected Health Information (PHI).

Types of Protected Health Information (PHI)

- Health records: Medical history and treatment details.

- Billing information: Payment history and insurance specifics.

- Appointment details: Dates, reasons for visits, and any cancellations.

Examples in Real Apps

Take a telemedicine app, for example. It might collect and retain patient records, appointment logs, and billing information, all of which must be kept secure and handled according to HIPAA standards.

Where this data exists

PHI can live in several places, including:

- Mobile apps

- Cloud servers

- APIs

Understanding where your data resides helps clarify compliance needs.

When an app becomes “HIPAA applicable”

An app falls under HIPAA when it collects, stores, or transmits PHI for a covered entity (such as a healthcare provider or health plan) or for one of its business associates. Even apps built for simple tasks, like appointment reminders, must comply if they handle PHI in that context.

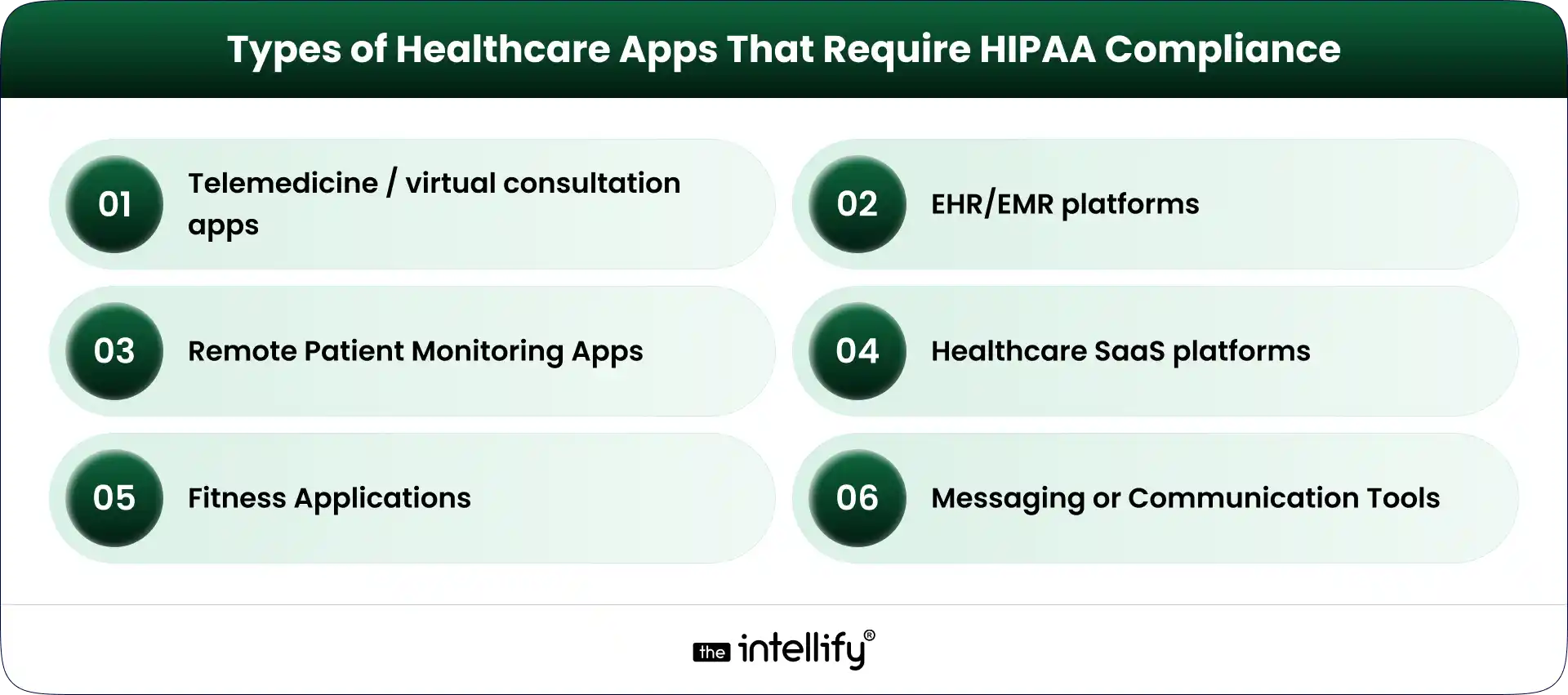

Types of Healthcare Apps That Require HIPAA Compliance

Certain healthcare applications absolutely need to prioritize compliance:

Telemedicine / virtual consultation apps

These platforms manage sensitive patient data during virtual visits, making robust security essential.

EHR/EMR platforms

Electronic Health Records (EHR) and Electronic Medical Records (EMR) systems must comply with HIPAA, as they contain extensive patient data.

Remote Patient Monitoring Apps

Apps that track patient health metrics routinely collect and manage PHI.

Healthcare SaaS platforms

Software-as-a-Service solutions that assist healthcare providers must make sure they follow HIPAA rules too.

Fitness Apps

When these apps collect health information for a healthcare provider or health plan, for example by sharing readings with a patient’s care team, they also need to be compliant.

Messaging or Communication Tools

Any app used in the delivery of patient care, such as chat or messaging tools, must be compliant when conversations include PHI.

Understanding HIPAA Rules That Impact App Development

To comply with HIPAA, it’s vital to grasp the key rules that guide app development:

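- Privacy Rule: Sets the standards for how PHI may be used and disclosed, and gives patients rights over their own health information.

- Security Rule: Specifies how to protect electronic PHI (ePHI) through administrative, physical, and technical safeguards, such as encryption and access controls.

- Breach Notification Rule: After a breach of unsecured PHI, organizations must notify affected individuals without unreasonable delay and no later than 60 days after discovering it. Breaches affecting 500 or more people must also be reported to the Department of Health and Human Services (HHS) and, in some cases, to the media.

- Omnibus Rule: The Omnibus Rule makes business associates directly liable for compliance, meaning vendors that handle PHI must meet HIPAA requirements too.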

Understanding these rules is crucial for developers aiming to craft compliant applications.
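The Breach Notification Rule’s timelines are simple date arithmetic, which makes them easy to encode in an incident-response checklist. This is a minimal Python sketch with hypothetical function names. The 60-day figure is an outer limit rather than a target, and questions such as when a breach counts as “discovered” belong with your legal or compliance team.

```python
from datetime import date, timedelta

# Outer limit from the Breach Notification Rule: notify affected individuals
# without unreasonable delay and no later than 60 calendar days after discovery.
MAX_NOTICE_DAYS = 60


def individual_notice_deadline(discovered_on: date) -> date:
    """Latest permissible date to notify affected individuals (not a target date)."""
    return discovered_on + timedelta(days=MAX_NOTICE_DAYS)


def hhs_notice_deadline(discovered_on: date, affected: int) -> date:
    """Deadline for reporting the breach to HHS.

    500 or more people affected: within the same 60-day window.
    Fewer than 500: log the breach and report it within 60 days after
    the end of the calendar year (counted here from December 31).
    """
    if affected >= 500:
        return individual_notice_deadline(discovered_on)
    return date(discovered_on.year, 12, 31) + timedelta(days=MAX_NOTICE_DAYS)
```

For a breach discovered on March 1, 2024, `individual_notice_deadline` returns April 30, 2024.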

HIPAA Safeguards Every Healthcare App Must Follow

When developing a HIPAA compliant app, you need to implement several key safeguards:

- Administrative safeguards: These encompass policies regarding data access, staff training, and regular compliance assessments.

- Physical safeguards: It’s vital to keep devices and data centers secure from unauthorized access.

- Technical safeguards: Technical measures like encryption, authentication protocols, and activity monitoring must be put in place.
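HIPAA requires person or entity authentication but does not name a specific method. One widely used second factor is a time-based one-time password (TOTP, defined in RFC 6238), the six-digit code shown by authenticator apps. The sketch below uses only the Python standard library. The function names are hypothetical, and a production app would more likely rely on a maintained library, store each user’s secret encrypted, and rate-limit attempts.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def totp(secret: bytes, at: Optional[float] = None, step: int = 30, digits: int = 6) -> str:
    """Return the RFC 6238 time-based one-time password (HMAC-SHA1 variant)."""
    now = time.time() if at is None else at
    counter = int(now // step)  # number of time steps since the Unix epoch
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F  # dynamic truncation from RFC 4226
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def verify_totp(secret: bytes, submitted: str, at: Optional[float] = None,
                step: int = 30, digits: int = 6, window: int = 1) -> bool:
    """Accept the code for the current step or up to `window` steps either side."""
    now = time.time() if at is None else at
    candidates = (now + drift * step for drift in range(-window, window + 1))
    return any(
        # compare_digest avoids leaking timing information about the expected code
        hmac.compare_digest(totp(secret, t, step, digits), submitted)
        for t in candidates
        if t >= 0  # skip negative times, which have no valid counter
    )
```

The small drift window tolerates clock differences between the phone and the server without accepting stale codes indefinitely.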

Essential Features of a HIPAA Compliant App

To stay compliant, your app should incorporate these essential features:

1. Data encryption in transit and at rest: Protects PHI while it moves between devices and servers and while it is stored.

2. Secure login & multi-factor authentication: Adds an extra layer of user security.

3. Role-based access control: Ensures that only authorized personnel can access PHI.

4. Audit logs & activity tracking: Records who accesses data and when.

5. Secure data storage & backups: Shields against potential data loss.

6. API security & third-party integrations: Protects data shared with other applications.

7. Session timeouts and automatic logouts: Prevent unauthorized access if users leave the app open.
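Role-based access control, audit logging, and automatic logout (features 3, 4, and 7) often meet in a single authorization check. The following Python sketch assumes a hypothetical role-to-permission map and a 15-minute idle timeout; the `Session` class and `authorize` function are illustrative, and the audit log is an in-memory list standing in for append-only storage.

```python
import time
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical roles and permissions; a real app would load these from policy config.
ROLE_PERMISSIONS = {
    "doctor": {"read_record", "update_record"},
    "nurse": {"read_record"},
    "billing": {"read_billing"},
}

IDLE_TIMEOUT_SECONDS = 15 * 60  # illustrative value; choose it in your risk assessment

# In-memory stand-in for an append-only audit store.
audit_log: list = []


@dataclass
class Session:
    user_id: str
    role: str
    last_activity: float = field(default_factory=time.monotonic)

    def is_expired(self, now: float) -> bool:
        return now - self.last_activity > IDLE_TIMEOUT_SECONDS


def authorize(session: Session, permission: str, record_id: str,
              now: Optional[float] = None) -> bool:
    """Check role permissions and idle timeout, and audit every attempt."""
    now = time.monotonic() if now is None else now
    if session.is_expired(now):
        allowed, reason = False, "session_expired"
    elif permission in ROLE_PERMISSIONS.get(session.role, set()):  # unknown role: deny
        allowed, reason = True, "ok"
        session.last_activity = now  # only successful activity resets the idle timer
    else:
        allowed, reason = False, "forbidden"
    audit_log.append({
        "user_id": session.user_id,
        "permission": permission,
        "record_id": record_id,
        "allowed": allowed,
        "reason": reason,
        "logged_at": time.time(),  # wall-clock timestamp for the audit trail
    })
    return allowed
```

The design choices that matter are denying by default and logging refused attempts as well as successful ones; the specific roles and timeout are placeholders.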

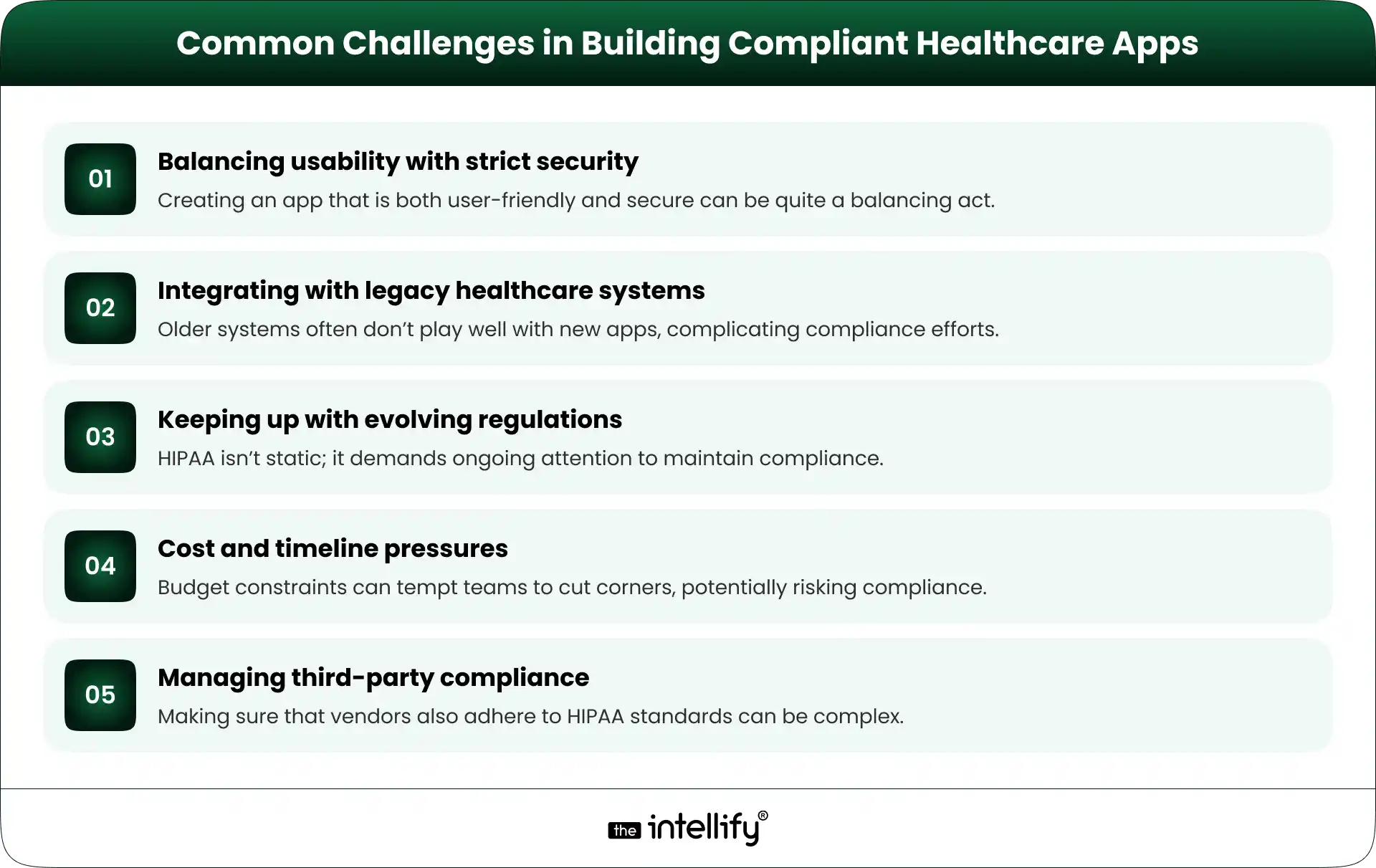

HIPAA Compliant App Development: Step-by-Step Process

HIPAA compliant healthcare mobile app development involves several key steps:

- Requirement gathering with compliance in mind: Start by clearly outlining what data your app will handle and which HIPAA standards apply.

- Risk assessment & planning: Evaluate potential risks to data security and map out your compliance strategy.

- UI/UX design with privacy-first approach: Design your app with user privacy in focus, making data protection features easy to access.

- Secure development practices: Embed security measures throughout the development cycle to minimize risks.

- Testing (security + compliance validation): Execute thorough testing to ensure the app is both secure and compliant.

- Deployment on compliant infrastructure: Utilize hosting solutions that meet HIPAA compliance standards for data protection.

- Ongoing monitoring & updates: Continuously watch for vulnerabilities and make updates to keep your app compliant.
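For the risk assessment step, the HIPAA Security Rule requires a risk analysis but does not prescribe a scoring formula. A common convention is to rate each risk’s likelihood and impact on a 1 to 5 scale and multiply them, so mitigation work starts with the highest scores. The Python sketch below shows that approach; the `Risk` class, the example risks, and the high/medium/low thresholds are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    description: str
    likelihood: int  # 1 (rare) to 5 (almost certain)
    impact: int      # 1 (negligible) to 5 (severe harm to patients or the business)

    def __post_init__(self) -> None:
        for name in ("likelihood", "impact"):
            if not 1 <= getattr(self, name) <= 5:
                raise ValueError(f"{name} must be between 1 and 5")

    @property
    def score(self) -> int:
        return self.likelihood * self.impact

    @property
    def level(self) -> str:
        # Illustrative thresholds; set your own in the risk management plan.
        if self.score >= 15:
            return "high"
        if self.score >= 8:
            return "medium"
        return "low"


def prioritize(risks: list) -> list:
    """Return risks sorted from highest to lowest score."""
    return sorted(risks, key=lambda r: r.score, reverse=True)


risks = [
    Risk("Idle session left open on a shared tablet", likelihood=3, impact=3),
    Risk("PHI cached unencrypted on the device", likelihood=4, impact=5),
    Risk("Analytics SDK receives appointment data", likelihood=2, impact=4),
]
for risk in prioritize(risks):
    print(f"{risk.level:>6}  {risk.score:>2}  {risk.description}")
```

Revisiting the same register during the ongoing monitoring step keeps the scores current as the app changes.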

Key Security Requirements for HIPAA Compliant Mobile Apps

Key security elements to include are:

- Data encryption: Protect data at rest and during transit.

- Secure cloud: Choose hosting providers that offer HIPAA-eligible services and will sign a BAA.

- Access control systems: Implement strong access management protocols.

- Data integrity protection: Ensure that data is not altered or destroyed without authorization.

- Regular vulnerability testing: Identify and address any potential threats.

- Business Associate Agreements (BAAs): Confirm third-party vendors also comply with HIPAA.
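For data integrity protection, one option is to store a keyed hash (HMAC) alongside each record and check it on read, so unauthorized changes become detectable. This Python sketch assumes the key comes from a secrets manager and that records serialize to JSON; `sign_record` and `verify_record` are hypothetical names.

```python
import hashlib
import hmac
import json


def sign_record(record: dict, key: bytes) -> str:
    """Compute an HMAC-SHA256 over a canonical JSON encoding of the record."""
    # sort_keys and fixed separators make the encoding stable across writes
    payload = json.dumps(record, sort_keys=True, separators=(",", ":")).encode()
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify_record(record: dict, signature: str, key: bytes) -> bool:
    """Return True only if the record still matches its stored signature."""
    return hmac.compare_digest(sign_record(record, key), signature)
```

An HMAC detects alteration of a record, but not its deletion or replacement with an older, validly signed copy; those cases need audit logs and backups as well.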

Cost Factors of HIPAA Compliant App Development

Various elements can influence the cost of developing a HIPAA compliant app:

1. Complexity of features: More intricate features generally lead to higher development costs.

2. Security Implementation Level: Investing in robust security measures can be costly but is essential.

3. Integration Requirements: Connecting with EHR systems or APIs can significantly bump up expenses.

4. Compliance Audits & Testing: Ensuring compliance through stringent audits can add to the overall cost.

5. Maintenance and updates: Keeping up with ongoing compliance requirements will also need budgetary consideration.

Cutting costs in these areas may lead to dangerous compromises in data security.

How to Choose the Right HIPAA Compliant App Development Partner

Choosing the right partner for app development is critical:

- Proven healthcare experience: Look for partners who have a solid track record in healthcare.

- Understanding of HIPAA regulations: Make sure they genuinely understand HIPAA guidelines.

- Security-first development approach: Select partners who prioritize security during the development process.

- Ability to sign BAAs: Ensure they’re willing and capable of signing Business Associate Agreements.

- Portfolio of compliant apps: Review their past work for examples of HIPAA compliant applications.

- Long-term support & scalability: Look for partners who can support your app as it grows and as regulations evolve.

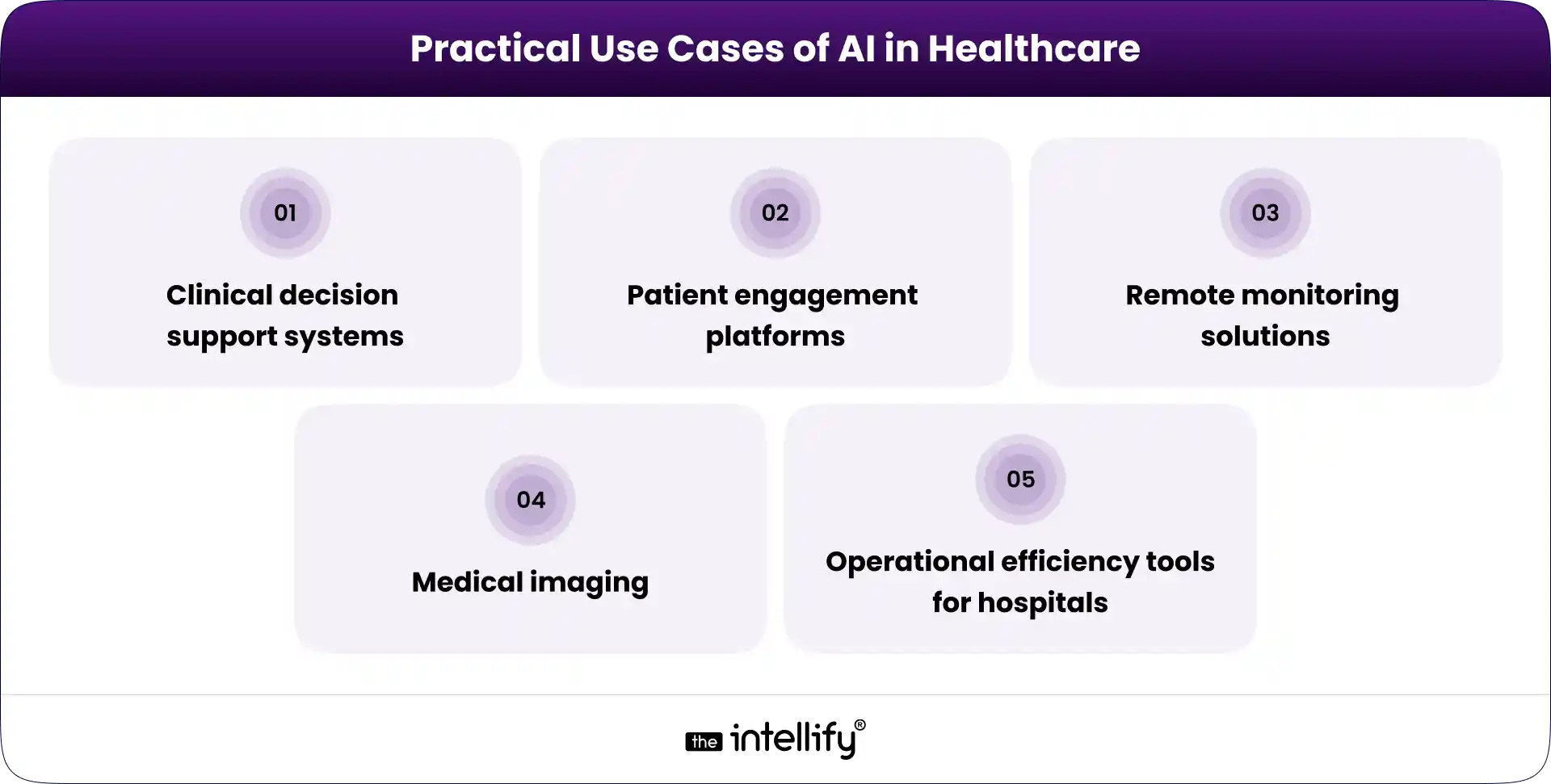

Future Trends in Healthcare App Development

The future of healthcare app development is bright and continuously changing. Here are some key trends to watch:

- AI in healthcare: While AI holds immense potential, it also brings along compliance challenges that developers must navigate.

- Remote care & wearable integrations: Demand for remote care and wearable-connected apps keeps growing, and each new device or data flow needs its own compliance review.

- Cloud-native secure healthcare platforms: Expect a shift towards cloud-native solutions that prioritize security and data management.

- Growing focus on patient-controlled data: Patients are gaining more control over their health data, making compliance even more crucial.

- Increasing audits and stricter enforcement: Regulatory bodies are upping the frequency of audits, demanding a robust focus on compliance.

Final Thoughts

In closing, grasping the nuances of HIPAA compliant app development is vital for any organization involved in healthcare. Ignoring these regulations can lead to considerable legal and financial repercussions. By prioritizing compliance from the get-go, you’re paving the way for long-term success and trust.

If you’re contemplating developing a HIPAA-compliant app, feel free to reach out to us at The Intellify for expert guidance and tailored solutions. We’re here to help you navigate the complexities of healthcare app development, ensuring you deliver secure and compliant applications.

Frequently asked questions (FAQs)

1. Do all healthcare apps need HIPAA compliance?

Not every app needs it. If your app handles patient health data such as reports, prescriptions, or consultations for a healthcare provider, health plan, or one of their business associates, HIPAA rules apply. Apps that only track general fitness without medical data usually don’t require it.

2. What does it really mean for an app to be HIPAA compliant?

It means the app is built to keep patient data safe at every stage, whether it’s stored, shared, or accessed. This includes encryption, secure logins, and limiting access to sensitive information.

3. Can I make my app compliant after launching it?

You can, but it’s not ideal. Fixing compliance later often requires reworking core parts of the app, which increases time and cost. It’s much easier to plan for it from the beginning.

4. What are the most common mistakes in compliant app development?

Common mistakes include weak encryption, poor access control, and using third-party tools that aren’t secure. Even small gaps can lead to serious data risks if not handled properly.

5. How long does it take to build a compliant healthcare app?

It depends on the app’s complexity. Compliance adds extra time for planning, security setup, and testing, but it helps avoid bigger issues after launch.

6. Do third-party tools (like chat, analytics, or APIs) affect compliance?

Yes, they do. If these tools handle patient data, the vendor must meet HIPAA requirements, and you’ll need a Business Associate Agreement (BAA) with them to ensure the data is handled securely.

7. What’s the difference between a secure app and a HIPAA-compliant app?

A secure app focuses on protecting data technically, while HIPAA compliance also includes legal rules and how data is managed. It’s a broader approach that goes beyond just security.